A DAG is just a Python file used to organize tasks and set their execution context. DAGs do not perform any actual computation. Instead, tasks are the elements of Airflow that actually "do the work" we want performed. It is your job to write the configuration and arrange the tasks in a specific order to create a complete data pipeline.

When you create a file in the dags folder, it automatically shows up in the UI. To run the DAG file from the web UI, follow these steps. Before running the DAG, make sure that the Airflow webserver and scheduler are running.

Click on the DAG file name and the window shown in the image opens, with the Tree View, Graph View, Task Duration and other tabs marked in yellow. The graph view shows the task dependencies: dummy_task runs first, and python_task runs after it.

To check the log for a task, double-click on the task, then click on the Log tab. The log details for the task appear as in the image below, where the yellow marks show that it ran successfully and highlight the output.

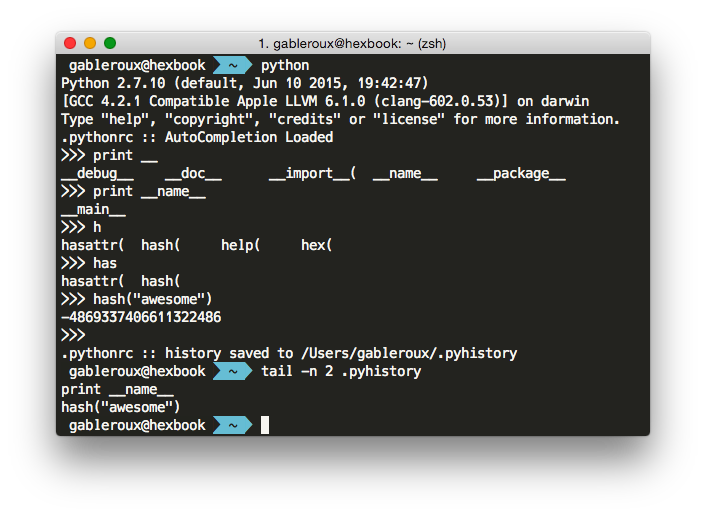

In the default arguments, the retry settings carry the comment `# If a task fails, retry it once after waiting`.

Next, give the DAG a name, configure the schedule, and set the DAG settings. The example leaves a cron-style `schedule_interval` commented out and describes the DAG as a use case of the Python operator in Airflow. We can schedule a DAG by giving either a preset or a cron expression, as shown in the table.

| Preset | Meaning | Cron |
| --- | --- | --- |
| `None` | Don't schedule; use for exclusively "externally triggered" DAGs | |
| `@once` | Schedule once and only once | |
| `@hourly` | Run once an hour at the beginning of the hour | `0 * * * *` |
| `@daily` | Run once a day at midnight | `0 0 * * *` |
| `@weekly` | Run once a week at midnight on Sunday morning | `0 0 * * 0` |
| `@monthly` | Run once a month at midnight on the first day of the month | `0 0 1 * *` |
| `@yearly` | Run once a year at midnight of January 1 | `0 0 1 1 *` |

Note: Use `schedule_interval=None` and not `schedule_interval='None'` when you don't want to schedule your DAG.

The next step is setting up all the tasks in the workflow:

`dummy_task = DummyOperator(task_id='dummy_task', retries=3, dag=dag_python)`

`python_task = PythonOperator(task_id='python_task', python_callable=my_func, dag=dag_python)`

Here dummy_task and python_task are tasks created by instantiating operators, and python_task calls the Python function to return its output.

Finally, we set up the dependencies, that is, the order in which the tasks should be executed. There are a few ways to define dependencies between them, for example `dummy_task >> python_task` or `dummy_task.set_downstream(python_task)`. Either line says that dummy_task runs first and python_task executes after it.
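To make the schedule table and the note about `None` more concrete, here is a small plain-Python sketch. It is not Airflow's own code: `PRESET_TO_CRON` and `describe_schedule` are hypothetical names introduced only for illustration, and the cron strings are the equivalents Airflow documents for its presets.

```python
# Cron equivalents of Airflow's documented schedule presets.
# PRESET_TO_CRON is an illustrative lookup, not part of Airflow's API.
PRESET_TO_CRON = {
    "@hourly": "0 * * * *",
    "@daily": "0 0 * * *",
    "@weekly": "0 0 * * 0",
    "@monthly": "0 0 1 * *",
    "@yearly": "0 0 1 1 *",
}


def describe_schedule(schedule_interval):
    """Hypothetical helper: say how a schedule_interval value would be read."""
    if schedule_interval is None:
        # The Python value None means "never scheduled automatically".
        return "not scheduled; externally triggered only"
    if schedule_interval == "None":
        # The string 'None' is not the same thing as the value None.
        raise ValueError("use schedule_interval=None, not the string 'None'")
    if schedule_interval == "@once":
        return "scheduled once and only once"
    # Presets map to their cron equivalent; anything else is treated as cron.
    cron = PRESET_TO_CRON.get(schedule_interval, schedule_interval)
    return f"cron: {cron}"


if __name__ == "__main__":
    for value in (None, "@once", "@weekly", "0 0 1 * *"):
        print(repr(value), "->", describe_schedule(value))
```

The helper only shows how the presets relate to cron expressions and why the string `'None'` should be rejected; in a real DAG file the value is simply passed as `schedule_interval`.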

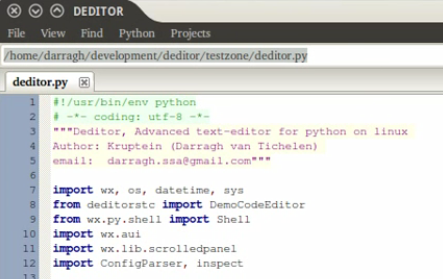

Prerequisite: Ubuntu installed in a virtual machine.

In this scenario, we will learn how to use the Python operator in an Airflow DAG. The PythonOperator is a straightforward but powerful operator that lets you execute a Python callable from your DAG. We create a function and return its output through the Python operator, running it locally on a schedule.

Create a DAG file in the /airflow/dags folder. After creating the DAG file in the dags folder, follow the steps below to write it.

Step 1: Importing modules

Import the Python dependencies needed for the workflow, including `DummyOperator` and `PythonOperator`.

Next, define the default and DAG-specific arguments.

Then we create a simple Python function that returns some output for the PythonOperator use case.
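As a rough illustration of the simple Python function described above, the sketch below shows the kind of callable a PythonOperator could be given. The name `my_func` is the one passed as `python_callable` elsewhere in this post; the body and the message text are assumptions, since the original function is not shown.

```python
def my_func():
    """Callable intended to be passed to PythonOperator as python_callable."""
    # The message text is a placeholder chosen for this sketch.
    message = "Hello from the Python operator"
    # Anything printed here is written to standard output.
    print(message)
    # Returning a value lets the caller (here, the operator) receive the output.
    return message


if __name__ == "__main__":
    # The callable is ordinary Python, so it can be checked without Airflow.
    result = my_func()
    print("returned:", result)
```

Keeping the callable free of Airflow imports means it can be run and checked on its own before it is wired into a task.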
